5.8 Submitting a MapReduce Job to a Hadoop Cluster

2016-03-14 15:29:24

Normally, while we are developing a MapReduce job, we debug it directly on the local machine.

In production, however, we package the MapReduce job as a jar and upload it to the Hadoop cluster to run there.

Because our project already uses Maven, packaging takes nothing more than "mvn package". There are, however, two things to keep in mind:

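1. If the job uses jars that the Hadoop cluster environment does not provide, it will fail once it is submitted. We therefore need to bundle the third-party dependencies into our own jar.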

2. The Hadoop jars that MapReduce depends on are already provided by the cluster environment, so in pom.xml we should set the scope of those dependencies to provided.

I. Modify pom.xml

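We can use the maven-assembly-plugin to bundle the third-party dependencies into the job jar, while marking the Hadoop dependencies as provided so that they are left out: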

```xml
...
<!-- provided: supplied by the cluster at runtime, so not bundled into the jar -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-common</artifactId>
    <version>2.6.0-cdh5.4.7</version>
    <scope>provided</scope>
</dependency>
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-hdfs</artifactId>
    <version>2.6.0-cdh5.4.7</version>
    <scope>provided</scope>
</dependency>
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-mapreduce-client-app</artifactId>
    <version>2.6.0-cdh5.4.7</version>
    <scope>provided</scope>
</dependency>
...
<!-- <plugins> sits inside the <build> element -->
<plugins>
    <plugin>
        <artifactId>maven-assembly-plugin</artifactId>
        <configuration>
            <descriptorRefs>
                <!-- predefined descriptor: project classes plus runtime dependencies -->
                <descriptorRef>jar-with-dependencies</descriptorRef>
            </descriptorRefs>
            <archive>
                <manifest>
                    <!-- written to the jar manifest as Main-Class -->
                    <mainClass>mapreduce.WordCount</mainClass>
                </manifest>
            </archive>
        </configuration>
    </plugin>
</plugins>
```

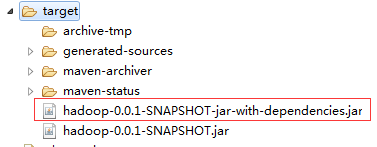

Now we package the project with "mvn assembly:assembly". After the build, the target directory contains two jar files:

The one whose name ends in "jar-with-dependencies" is the jar that also bundles the other dependencies.
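Before submitting, it can be worth checking what actually ended up in the fat jar. The following is a minimal sketch that uses only the JDK's `java.util.jar` API; the class `JarInspector` and its methods are hypothetical helpers, and the path prefixes are just examples. It reads the `Main-Class` attribute that the `<mainClass>` setting should have written, and counts entries under a path prefix, so you can confirm that third-party classes were bundled while `org/apache/hadoop/` classes (scope provided) are, as expected, absent.

```java
import java.io.IOException;
import java.util.jar.Attributes;
import java.util.jar.JarFile;
import java.util.jar.Manifest;

/** Hypothetical helper for inspecting a packaged job jar before submission. */
public class JarInspector {

    /** Returns the Main-Class attribute of the jar manifest, or null if there is none. */
    public static String mainClass(String jarPath) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            Manifest manifest = jar.getManifest();
            if (manifest == null) {
                return null;
            }
            return manifest.getMainAttributes().getValue(Attributes.Name.MAIN_CLASS);
        }
    }

    /** Counts entries whose path starts with the given prefix, e.g. "org/apache/hadoop/". */
    public static long countEntries(String jarPath, String prefix) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            // count() is terminal, so the stream is consumed before the jar is closed
            return jar.stream().filter(e -> e.getName().startsWith(prefix)).count();
        }
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 1) {
            System.err.println("Usage: java JarInspector <path-to-jar>");
            System.exit(1);
        }
        String jarPath = args[0];
        System.out.println("Main-Class: " + mainClass(jarPath));
        // Expected to be 0 when the Hadoop dependencies use scope "provided"
        System.out.println("org/apache/hadoop/ entries: " + countEntries(jarPath, "org/apache/hadoop/"));
    }
}
```

After compiling it with javac, you could run it against the file in target, for example `java JarInspector target/hadoop-0.0.1-SNAPSHOT-jar-with-dependencies.jar`.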

II. Submit the jar file to the Hadoop cluster

Command format:

```shell
hadoop jar xxx.jar mainclass args
```

In our example, the command is:

```shell
hadoop jar hadoop-0.0.1-SNAPSHOT-jar-with-dependencies.jar mapreduce.WordCount
```
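One detail of this command is worth knowing. Because the pom writes `<mainClass>mapreduce.WordCount</mainClass>` into the jar manifest, the class name on the command line is not strictly needed. As far as we can tell from Hadoop 2.x's `RunJar` (the class behind `hadoop jar`), a `Main-Class` in the manifest takes precedence, and every argument after the jar name, including `mapreduce.WordCount`, is then passed to the program's `main` as an ordinary argument. That is harmless if the driver ignores its arguments, but matters if it reads input/output paths from `args`. Below is a simplified, hypothetical model of that resolution logic; `HadoopJarModel` is our own illustration, not part of Hadoop.

```java
import java.util.Arrays;

/**
 * Hypothetical, simplified model of how "hadoop jar <jar> [mainClass] args..."
 * chooses the class to run. Modelled on Hadoop 2.x RunJar; not its actual source.
 */
public class HadoopJarModel {

    /** The class that would be run and the arguments its main(String[]) would receive. */
    public static final class Invocation {
        public final String mainClass;
        public final String[] programArgs;

        Invocation(String mainClass, String[] programArgs) {
            this.mainClass = mainClass;
            this.programArgs = programArgs;
        }

        @Override
        public String toString() {
            return mainClass + " " + Arrays.toString(programArgs);
        }
    }

    /**
     * @param manifestMainClass Main-Class from the jar manifest, or null if absent
     * @param argsAfterJar      everything that follows the jar name on the command line
     */
    public static Invocation resolve(String manifestMainClass, String... argsAfterJar) {
        int firstArg = 0;
        String mainClass = manifestMainClass;
        if (mainClass == null) {
            if (argsAfterJar.length < 1) {
                throw new IllegalArgumentException("Usage: hadoop jar <jar> [mainClass] args...");
            }
            // No Main-Class in the manifest: the first argument names the class to run
            mainClass = argsAfterJar[firstArg++];
        }
        // All remaining arguments go to the program unchanged
        String[] rest = Arrays.copyOfRange(argsAfterJar, firstArg, argsAfterJar.length);
        return new Invocation(mainClass.replace('/', '.'), rest);
    }

    public static void main(String[] args) {
        // Manifest has Main-Class: prints "mapreduce.WordCount [mapreduce.WordCount]"
        System.out.println(resolve("mapreduce.WordCount", "mapreduce.WordCount"));
        // No Main-Class in the manifest: prints "mapreduce.WordCount []"
        System.out.println(resolve(null, "mapreduce.WordCount"));
    }
}
```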

After submission, the console prints:

```
[hadoop@iZ28csbxcf3Z target]$ hadoop jar hadoop-0.0.1-SNAPSHOT.jar mapreduce.WordCount
....
16/03/14 13:54:21 INFO client.RMProxy: Connecting to ResourceManager at /115.28.65.149:8050
...
16/03/14 13:54:23 INFO input.FileInputFormat: Total input paths to process : 1
16/03/14 13:54:23 INFO mapreduce.JobSubmitter: number of splits:1
16/03/14 13:54:24 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1457170855988_0002
16/03/14 13:54:25 INFO impl.YarnClientImpl: Submitted application application_1457170855988_0002
16/03/14 13:54:25 INFO mapreduce.Job: The url to track the job: http://iZ28csbxcf3Z:8088/proxy/application_1457170855988_0002/
16/03/14 13:54:25 INFO mapreduce.Job: Running job: job_1457170855988_0002
16/03/14 13:54:43 INFO mapreduce.Job: Job job_1457170855988_0002 running in uber mode : false
16/03/14 13:54:43 INFO mapreduce.Job:  map 0% reduce 0%
16/03/14 13:54:54 INFO mapreduce.Job:  map 100% reduce 0%
16/03/14 13:55:16 INFO mapreduce.Job:  map 100% reduce 50%
16/03/14 13:55:17 INFO mapreduce.Job:  map 100% reduce 100%
# execution finished
16/03/14 13:55:18 INFO mapreduce.Job: Job job_1457170855988_0002 completed successfully
16/03/14 13:55:18 INFO mapreduce.Job: Counters: 50
    File System Counters
        FILE: Number of bytes read=43
        FILE: Number of bytes written=327231
        FILE: Number of read operations=0
        FILE: Number of large read operations=0
        FILE: Number of write operations=0
        HDFS: Number of bytes read=128
        HDFS: Number of bytes written=19
        HDFS: Number of read operations=9
        HDFS: Number of large read operations=0
        HDFS: Number of write operations=4
    Job Counters
        Launched map tasks=1
        Launched reduce tasks=2
        Data-local map tasks=1
        Total time spent by all maps in occupied slots (ms)=9495
        Total time spent by all reduces in occupied slots (ms)=37355
        Total time spent by all map tasks (ms)=9495
        Total time spent by all reduce tasks (ms)=37355
        Total vcore-seconds taken by all map tasks=9495
        Total vcore-seconds taken by all reduce tasks=37355
        Total megabyte-seconds taken by all map tasks=9722880
        Total megabyte-seconds taken by all reduce tasks=38251520
    Map-Reduce Framework
        Map input records=2
        Map output records=4
        Map output bytes=35
        Map output materialized bytes=43
        Input split bytes=109
        Combine input records=4
        Combine output records=3
        Reduce input groups=3
        Reduce shuffle bytes=43
        Reduce input records=3
        Reduce output records=3
        Spilled Records=6
        Shuffled Maps =2
        Failed Shuffles=0
        Merged Map outputs=2
        GC time elapsed (ms)=683
        CPU time spent (ms)=3360
        Physical memory (bytes) snapshot=422203392
        Virtual memory (bytes) snapshot=8189263872
        Total committed heap usage (bytes)=275517440
    Custom Group
        Sensitive words=2
    Shuffle Errors
        BAD_ID=0
        CONNECTION=0
        IO_ERROR=0
        WRONG_LENGTH=0
        WRONG_MAP=0
        WRONG_REDUCE=0
    File Input Format Counters
        Bytes Read=19
    File Output Format Counters
        Bytes Written=19
```